Don’t Settle for Eventual: Scalable Causal Consistency for Wide-Area Storage with COPS

Wyatt Lloyd, Michael J. Freedman, Michael Kaminsky, David G. Andersen

Proc. 23rd ACM Symposium on Operating Systems Principles (SOSP ’11), Cascais, Portugal, October 2011.

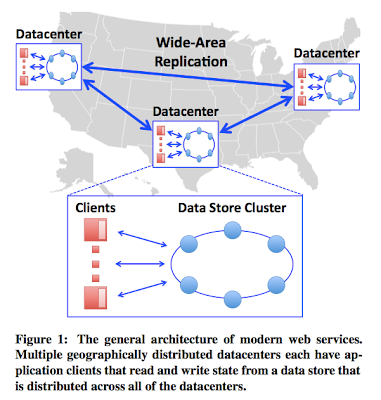

Geo-replicated, distributed data stores are all the rage now. These stores replicate all data over the wide area network (WAN) to datacenters in different geographic regions. Geo-replication is very useful for global-scale web applications, especially social networking applications. In social networking, updates to your account (tweets, posts, etc.) are replicated across several regions because you may have followers there, and they should get low-latency access to your updates. The paper refers to the properties desired of such a geo-replication service with the acronym ALPS (Availability, low Latency, Partition-tolerance, and high Scalability).

Of course, in addition to ALPS, we need to add some consistency requirement to the geo-replication service, because otherwise the updates to a record can arrive at replicas in arbitrary orders and expose inconsistent views of the record. The eventual consistency property that Dynamo advocates is too weak for the programmer to build other services on: the inconsistent views of the updates can escape and poison those other services. On the other hand, strong consistency (linearizability) cannot be achieved together with availability and partition tolerance, per the CAP theorem. So, causal consistency is what the COPS paper shoots for. Actually, a later paper shows that this is the best you can get under these constraints.

Yahoo!’s PNUTS provides per-record timeline consistency (or per-key sequential consistency), which is a pretty good consistency guarantee. All the writes to a record are serialized by the per-record-primary server responsible for that record and these updates are replicated in the same order (the order the per-record primary determines) to the other replicas. PNUTS, however, does not provide any consistency across records/keys. Achieving such a consistency (more specifically, causal consistency) across records/keys introduces scalability challenges and is the aim of this paper, as we explain in the rest of this summary. (Spoiler: To achieve scalability, instead of using vector clocks, COPS explicitly encodes dependencies in metadata associated with each key's version. When keys are replicated remotely, the receiving datacenter performs dependency checks before committing the incoming version.)

Causal consistency model

Dave Andersen explains the causal-consistency model nicely in his blog post. Consider four updates by a single thread: write A=1, write B=dog, write B=cow, write A=2.

In Dynamo, the order in which these updates are applied is unconstrained. In a remote datacenter, read(A), read(A) might return A=2, A=1.

In PNUTS, the value of “A” could never go backwards: A=1, A=1, … A=2, A=2, …

But in both Dynamo and PNUTS, the relative values of A and B are unconstrained, and each of these systems could return {2, dog} for read(A), read(B), even though the originating thread set B=cow before it set A=2.

In COPS, this latter read sequence could not happen. The allowable reads are: {1, (unset)}, {1, dog}, {1, cow}, {2, cow}.
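To make the rule behind that list concrete, here is a minimal sketch that enumerates the allowed results of read(A) followed by read(B). It assumes a single writer thread and a reader whose read of A makes it depend on every write that preceded the observed version of A. The names `WRITES` and `allowed_reads` are mine, not from the paper or from Dave's post.

```python
# Writes issued by one thread, in program order (from the example above).
WRITES = [("A", 1), ("B", "dog"), ("B", "cow"), ("A", 2)]


def allowed_reads():
    """Enumerate (a, b) results of read(A) then read(B) under causal consistency."""
    a_versions = [(i, v) for i, (k, v) in enumerate(WRITES) if k == "A"]
    # Position -1 with value None stands for "B has not been written yet".
    b_versions = [(-1, None)] + [(i, v) for i, (k, v) in enumerate(WRITES) if k == "B"]
    result = []
    for a_pos, a_val in a_versions:
        # Newest write to B that happened before the observed write to A.
        floor = max(i for i, _ in b_versions if i < a_pos)
        for b_pos, b_val in b_versions:
            # The read of B may not return anything older than that write.
            if b_pos >= floor:
                result.append((a_val, b_val))
    return result


print(allowed_reads())
```

Note that {2, dog} never appears: once the reader has seen A=2, the only version of B that respects the writer's order is cow.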

In all three systems (in PNUTS, using Read-any), concurrent updates at different datacenters could cause conflicts that invoke conflict handlers, and in this respect the three systems do not differ. And this brings us to the issue of conflict handling in COPS.

Convergent conflict handling

In causal consistency you can still have conflicts for concurrent events. (This is inherent in a distributed system: two nodes may start updates concurrently, and this leads to a "logical" conflict as both updates are uninformed of each other.) To resolve these and to achieve convergence at all datacenters, COPS employs convergent conflict handling, which refers to using an associative and commutative handler. Thomas's last-write-wins rule satisfies this constraint and is used by default, or developers can write application-specific conflict-resolution rules provided that they are convergent.

More concretely, convergent conflict handling requires that all conflicting puts be handled in the same manner at all replicas, using a handler function h. This handler function h must be associative and commutative, so that replicas can handle conflicting writes in the order they receive them and the results of these handlings will converge (e.g., one replica’s h(a, h(b, c)) and another’s h(c, h(b, a)) agree).
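As a small illustration of why associativity and commutativity matter, here is a sketch of a last-writer-wins handler plus a brute-force check that every arrival order yields the same winner. The `Version` fields, the tie-break on `node_id`, and the helper `converges` are assumptions for illustration, not the COPS implementation.

```python
from functools import reduce
from itertools import permutations
from typing import NamedTuple


class Version(NamedTuple):
    timestamp: int  # hypothetical logical timestamp of the put
    node_id: int    # tie-breaker; (timestamp, node_id) is assumed unique
    value: str


def lww(a: Version, b: Version) -> Version:
    """Last-writer-wins: keep the version with the larger (timestamp, node_id)."""
    return max(a, b, key=lambda v: (v.timestamp, v.node_id))


def converges(handler, versions) -> bool:
    """True if folding the handler over every arrival order gives one outcome."""
    outcomes = {reduce(handler, order) for order in permutations(versions)}
    return len(outcomes) == 1


a = Version(1, 0, "a")
b = Version(2, 1, "b")
c = Version(2, 0, "c")
print(converges(lww, [a, b, c]))                # last-writer-wins
print(converges(lambda x, y: x, [a, b, c]))     # "first arrival wins"
```

The second handler (keep whichever write arrived first) is the kind of rule that is not convergent: replicas that receive the writes in different orders end up with different values.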

The paper coins the term "causal+" to refer to causal consistency plus convergent conflict handling.

Scalability problems in causal+ model

Previous causal+ systems (Bayou, PRACTI) were not scalable, because they achieved causal+ via log-based replay. Log-exchange-based serialization inhibits replica scalability, as it relies on a single serialization point in each replica to establish ordering. Thus, causal dependencies between keys are limited to the set of keys that can be stored on one node, or alternatively, a single node must provide a commit ordering and log for all operations across a cluster.

Inner workings of COPS

A get operation for a record is served locally at the closest datacenter and is non-blocking. Since all data is replicated at each datacenter, gets can always be local.

A put operation for a record proceeds as follows:

1) it is translated to a put-after-dependencies operation based on the dependencies seen at this site

2) it is queued for asynchronous replication to other sites/replicas

3) done is returned to the client at this point (early reply)

4) asynchronous replication to other sites/datacenters occurs.

Each put carries the dependencies on earlier operations that it must be ordered after. These dependencies are checked at each datacenter during replication, and when they are satisfied the value is updated there.
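The receiving side of this replication path can be sketched as follows. This is a toy single-process model under my own assumptions: versions are plain integers, dependencies are (key, version) pairs, and the class and method names (`Datacenter`, `receive`) are hypothetical rather than taken from the COPS code.

```python
class Datacenter:
    """Toy model of a datacenter applying replicated put-after operations."""

    def __init__(self, name):
        self.name = name
        self.store = {}    # key -> (version, value) visible to local gets
        self.pending = []  # replicated writes still waiting on dependencies

    def _satisfied(self, deps):
        # A dependency (key, version) is met once a version at least that new is visible.
        return all(k in self.store and self.store[k][0] >= v for k, v in deps)

    def receive(self, key, version, value, deps):
        """Accept a replicated put-after; commit it only when its deps are visible."""
        self.pending.append((key, version, value, deps))
        self._drain()

    def _drain(self):
        # Keep committing until no pending write can make progress.
        progress = True
        while progress:
            progress = False
            for write in list(self.pending):
                key, version, value, deps = write
                if self._satisfied(deps):
                    current = self.store.get(key)
                    if current is None or version > current[0]:
                        self.store[key] = (version, value)
                    self.pending.remove(write)
                    progress = True


site_a = Datacenter("A")
site_a.receive("reply", 2, "nice photo!", deps=[("photo", 1)])
print(site_a.store, len(site_a.pending))   # reply is held back
site_a.receive("photo", 1, "beach.jpg", deps=[])
print(site_a.store, len(site_a.pending))   # both are now visible
```

In the usage above the reply arrives before the photo it depends on, so it stays invisible to local readers until the photo is committed.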

Nodes in each datacenter are responsible for different partitions of the keyspace, but the system can track and enforce dependencies between keys stored on different nodes. COPS explicitly encodes dependencies in metadata associated with each key’s version. When keys are replicated remotely, the receiving datacenter performs dependency checks before committing the incoming version. The paper shows that by only checking the nearest dependencies you can reduce the number of dependency checks during replication and have a faster solution.
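One way to picture the nearest-dependency optimization: from the full set of things a write depends on, drop every entry that is already implied transitively by another entry. The sketch below does that over a small dependency graph. The graph representation and the function name `nearest` are my own assumptions, and it presumes the graph is acyclic, as causal dependencies are.

```python
def nearest(deps, graph):
    """Return the members of deps that no other member of deps depends on.

    graph maps a version id to the set of version ids it directly depends on.
    """
    def ancestors(node):
        # All versions reachable by following dependency edges from node.
        seen, stack = set(), list(graph.get(node, ()))
        while stack:
            n = stack.pop()
            if n not in seen:
                seen.add(n)
                stack.extend(graph.get(n, ()))
        return seen

    implied = set()
    for d in deps:
        implied |= ancestors(d)
    return set(deps) - implied


# Hypothetical history: y2 was written after reading x1, z3 after reading y2.
graph = {"x1": set(), "y2": {"x1"}, "z3": {"y2"}, "w1": set()}
print(nearest({"x1", "y2", "z3"}, graph))
print(nearest({"x1", "y2", "w1"}, graph))
```

Checking only z3 in the first case is enough, because z3 could not have been committed at the receiving datacenter unless y2 and x1 were committed there first.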

Subtleties in the definition of availability for the put operation

The availability of get and put operations is defined at the datacenter scope, not at the scope of the whole system. The paper defines availability as: "All operations issued to the data store complete successfully. No operation can block indefinitely or return an error signifying that data is unavailable."

What this means is that the availability of put is satisfied at step 3, when the early reply is returned to the client at the local datacenter. However, the replication of this put operation to other datacenters can in fact be blocked until the put-after dependencies of the operation are satisfied at those datacenters. Consider a put operation originated at site C that has put-after dependencies on updates originated at site B. When this put operation (now a put-after operation) is asynchronously replicated to site A at step 4, it will block at site A until site A receives the depended-upon updates originated at site B. If site B is partitioned away from site A, the put-after operation originated at C will block at A. Although sites C and A are connected, the put operation will not be able to complete step 4 as long as B is disconnected from A. I think this is a subtle point, which many readers may not notice even after reading the paper a couple of times.

COPS-GT (Get Transactions) operation

Reading a set of dependent keys using a single-key get interface cannot ensure causal+ consistency, even though the data store itself is causal+ consistent. To illustrate the problem the paper gives a photo album example, where Alice first changes her album access control list (ACL) to "friends only", and then writes a new description of her travels and adds more photos to the album. A natural (but incorrect!) implementation of code to read Alice's album might (1) fetch the ACL, (2) check permissions, and (3) fetch the album description and photos. This approach contains a straightforward "time-to-check-to-time-to-use" race condition: when Eve accesses the album, her get(ACL) might return the old ACL, which permitted anyone (including Eve) to read it, but her get(album contents) might return the "friends only" version.

To address this issue, a two-round COPS-GT (Get Transactions) algorithm is introduced in the paper. "In the first round, the library issues concurrent get by version operations to the local cluster for each key the client listed in get transaction. These values, however, may not be consistent with one another, as in the example above. Each get by version operation returns a <value, version, deps> tuple, where deps is a list of keys and versions. The client library then examines every dependency entry <key, version>. The causal dependencies for that result are satisfied if either the client did not request the dependent key, or if it did, the version it retrieved was greater than or equal to the version in the dependency list. For all keys that are not satisfied, the library issues a second round of concurrent get by version operations. The version requested will be the newest version seen in any dependency list from the first round. These versions satisfy all causal dependencies from the first round."
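The two rounds described in that quote can be sketched roughly like this. The in-memory `VERSIONS` table, the `LATEST_SEEN` map that simulates the stale ACL read, and the function names are all assumptions I made up to replay the photo album race; the real library issues the reads concurrently against the local cluster.

```python
# Hypothetical versioned store: (key, version) -> (value, deps).
VERSIONS = {
    ("acl", 1): ("public", []),
    ("acl", 2): ("friends only", []),
    ("album", 1): ("old photos", []),
    ("album", 3): ("private trip photos", [("acl", 2)]),
}
# What a "latest" read returns in round one: the ACL read raced ahead of Alice's update.
LATEST_SEEN = {"acl": 1, "album": 3}


def get_by_version(key, version=None):
    """Return (value, version, deps); version=None means 'latest visible'."""
    v = LATEST_SEEN[key] if version is None else version
    value, deps = VERSIONS[(key, v)]
    return value, v, deps


def get_trans(keys):
    # Round 1: read the latest visible version of every requested key.
    results = {k: get_by_version(k) for k in keys}
    # For each requested key, find the newest version any first-round result depends on.
    needed = {k: results[k][1] for k in keys}
    for k in keys:
        for dep_key, dep_version in results[k][2]:
            if dep_key in needed:
                needed[dep_key] = max(needed[dep_key], dep_version)
    # Round 2: re-fetch only the keys whose first-round version was too old.
    for k in keys:
        if needed[k] > results[k][1]:
            results[k] = get_by_version(k, needed[k])
    return {k: results[k][0] for k in keys}


print(get_trans(["acl", "album"]))
```

With the single-key interface Eve would have seen the "public" ACL next to the private photos; here the second round replaces the stale ACL with the version the album depends on.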

The paper notes that COPS-GT comes with additional cost: compared to the regular version of COPS, COPS-GT is less efficient for certain workloads (e.g., write-heavy) and is less robust to network partitions and datacenter failures.

Concluding remarks

The paper is a long and dense read. While the model is formalized well, I wish some issues got better coverage in the paper. One problem I had with the paper is that just when I think the paper is going to deal with a hard problem, it backs out and starts talking about other peripheral issues. For example, in Section 3.4, the problems with log-exchange-based serialization are not elaborated sufficiently for me; the paper skipped to discussing system design too quickly. In Section 4.1, when I thought the paper would be explaining composability of linearizability, the paper skipped to presenting the system components. (Here some papers by Herlihy are referenced saying that "linearizable systems can be implemented scalably by partitioning the keyspace into N linearizable partitions and having clients access each partition independently". But details are missing.)

UPDATE:

So how big can these dependencies get? It turns out, the dependencies are tracked by the client library based on the client's history. It seems like the dependency list can grow very long as the client reads many different keys. As a result, one can end up with a pretty long list of dependencies: to every other key read by the client.

Instead, to keep dependencies short and sweet, we can use Hybrid Logical Clocks (HLC). The loosely synchronized real-time component of HLC would help in truncating the dependency list. The logical clock component of HLC would help in maintaining this dependency list precisely even in the uncertainty region of loosely synchronized clocks.
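For readers who have not seen HLC, here is a minimal sketch of its timestamp update rules as they are commonly described: a timestamp is a pair (l, c), where l tracks the largest physical time heard of so far and c is a logical counter that breaks ties. This is not part of COPS; the class name, method names, and the injected `physical_clock` callable are assumptions for illustration.

```python
class HLC:
    """Hybrid logical clock producing (l, c) timestamps."""

    def __init__(self, physical_clock):
        self.pt = physical_clock  # callable returning the local physical time as an int
        self.l = 0                # largest physical time seen so far
        self.c = 0                # logical counter for events sharing the same l

    def now(self):
        """Timestamp a local or send event."""
        prev = self.l
        self.l = max(prev, self.pt())
        self.c = self.c + 1 if self.l == prev else 0
        return (self.l, self.c)

    def update(self, msg):
        """Timestamp a receive event, given the sender's (l, c) timestamp."""
        ml, mc = msg
        prev = self.l
        self.l = max(prev, ml, self.pt())
        if self.l == prev and self.l == ml:
            self.c = max(self.c, mc) + 1
        elif self.l == prev:
            self.c += 1
        elif self.l == ml:
            self.c = mc + 1
        else:
            self.c = 0
        return (self.l, self.c)


fast = HLC(lambda: 10)   # node whose physical clock reads 10
slow = HLC(lambda: 5)    # node whose physical clock lags behind
sent = fast.now()
received = slow.update(sent)
print(sent, received, received > sent)
```

Even though the receiver's physical clock lags, its timestamp for the receive event is ordered after the sender's, which is the property that lets the timestamps stand in for causal order within the clock uncertainty window.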

Finally, the COPS client library solution also did not account for whether the client has backchannels. Using real-time windows can help account for the backchannels as well.

Related links

High Scalability post on COPS and followup

COPS project page

Q&A for COPS

Dave's post clarifying some issues
