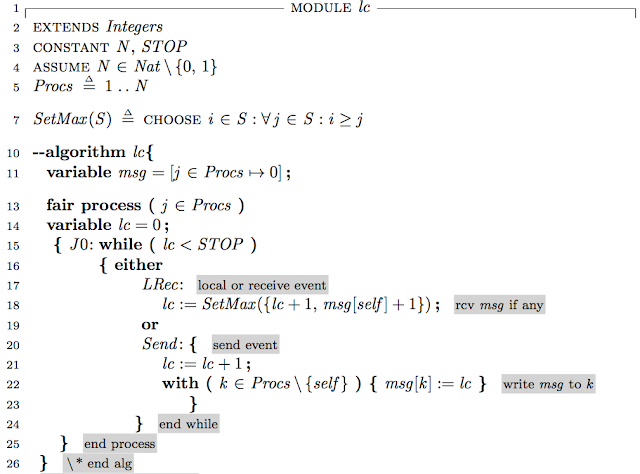

Paxos derived

Lamport's fault-intolerant state machine replication algorithm

In 1978, Lamport published his classic "Time, Clocks, and the Ordering of Events in a Distributed System". As an application of logical clocks, he presented a distributed replicated state machine algorithm, which he then instantiated to solve mutual exclusion as an example. Lamport complains that no one seems to be aware of the distributed replicated state machine algorithm introduced in the paper: "This is my most often cited paper. Many computer scientists claim to have read it. But I have rarely encountered anyone who was aware that the paper said anything about state machines. People seem to think that it is about either the causality relation on events in a distributed system, or the distributed mutual exclusion problem. People have insisted that there is nothing about state machines in the paper. I’ve even had to go back and reread it to convince myself that I really did remember...