I Can’t Believe It’s Not Causal! Scalable Causal Consistency with No Slowdown Cascades

I recently came across the Occult paper (NSDI'17) during my series on "The Use of Time in Distributed Databases." I had high expectations, but my in-depth reading surfaced significant concerns about its contributions and claims. Let me share my analysis, as there are still many valuable lessons to learn from Occult about causality maintenance and distributed systems design.

The Core Value Proposition

Occult (Observable Causal Consistency Using Lossy Timestamps) positions itself as a breakthrough in handling causal consistency at scale. The paper's key claim is that it's "the first scalable, geo-replicated data store that provides causal consistency without slowdown cascades."

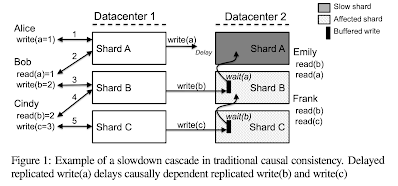

The problem they address is illustrated in Figure 1, where a slow or failed shard A (with delayed replication from master to secondary) creates cascading delays across other shards (B and C) because of dependency-waiting during write replication. This is what the paper means by "slowdown cascades". Occult's solution shifts this write-blocking to read-blocking. In other words, Occult eliminates dependency-blocking from write replication and blocks on the read path instead: a read served by a lagging shard waits (or retries) until that shard has caught up with what the client has already seen, which is what provides causal consistency.
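To make the read-blocking idea concrete, here is a minimal sketch of how I picture the read path. The `Replica` class, the `read` function, and the `max_retries` parameter are my own illustrative names, not the paper's API, and the shardstamp is simplified to a single write counter per shard.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    """One copy of a shard; shardstamp tracks the latest write it has applied."""
    shardstamp: int = 0
    data: dict = field(default_factory=dict)

    def apply(self, key, value, stamp):
        # Replicated writes are applied as they arrive, with no dependency wait.
        self.data[key] = value
        self.shardstamp = max(self.shardstamp, stamp)


def read(key, client_stamp, secondary, master, max_retries=2):
    """Serve from the secondary only if it has caught up to what the client saw."""
    for _ in range(max_retries):
        if secondary.shardstamp >= client_stamp:
            return secondary.data.get(key), "secondary"
        # A real client would pause here to let replication catch up.
    # The master has applied every write to its shard, so it is always safe.
    return master.data.get(key), "master"


master, secondary = Replica(), Replica()
master.apply("x", "v1", stamp=1)
secondary.apply("x", "v1", stamp=1)
master.apply("x", "v2", stamp=2)  # not yet replicated to the secondary

print(read("x", client_stamp=1, secondary=secondary, master=master))  # ('v1', 'secondary')
print(read("x", client_stamp=2, secondary=secondary, master=master))  # ('v2', 'master')
```

The point of the sketch is that the slow secondary only delays the one client that has already seen newer data on that shard; nothing on the write path waits for it.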

Questionable Premises

The paper presents dependency-blocking across shards for writes as a common problem, yet I struggle to identify any production systems that implement causal consistency (or any stronger consistency) this way. The cited examples are all academic systems like COPS, not real-world databases.

More importantly, the paper's claim of being "first" overlooks many existing solutions. Spanner (2012) had already demonstrated how to handle this challenge effectively using synchronized clocks, achieving even stronger consistency guarantees (strict-serializability) without slowdown cascades. Spanner already does what Occult proposes to do: it shifts write-blocking to read-blocking, and also uses synchronized clocks to help with reads.

The paper acknowledges Spanner only briefly in related work, noting its "heavier-weight mechanisms" and, perhaps as a result, its "aborting more often". This comparison feels incomplete given Spanner's superior consistency model and production-proven success.

The Secondary Reads Trade-off

A potential justification for Occult's approach is enabling reads from nearby secondaries without client stickiness. However, the paper doesn't analyze this trade-off at all. Why not just read from the masters? When are secondary reads sufficiently beneficial to justify the added complexity? The paper itself notes in the introduction that inconsistency at secondaries is extremely rare - citing Facebook's study showing fewer than six out of every million reads violating causal consistency even in an eventually-consistent data store.

In examining potential justifications for secondary reads, I see only two viable scenarios. First, secondary reads might help when the primary is CPU-constrained - but this only applies under very limited circumstances, typically with the smallest available VM instances, which is unlikely for production systems. Second, there's the latency consideration: while cross-availability-zone latencies wouldn't justify secondary reads in this case, cross-region latencies might make them worthwhile for single-key operations. However, even this advantage diminishes for transactional reads within general transactions, where reading from masters is more sensible to avoid transaction aborts due to conflicts, which would waste all the work done as part of the transaction.

The Client Session Problem

The paper's handling of client sessions (or rather the lack thereof) reveals another limitation. Their example (from the conference presentation) couples unrelated operations wholesale, such as linking a social media post to an academic document share, into the same dependency chain. MongoDB's causal consistency tokens (introduced in 2017, the same year) provide a better approach to session management.

Comparing Occult with Spanner reveals some interesting nuances. While Spanner can read from secondaries, it requires them to catch up to the current time (T_now). Occult takes a different approach by maintaining client causal shardstamps, allowing reads from secondaries without waiting for T_now synchronization. This theoretically enables causal consistency using earlier clock values than Spanner's current-time requirement.

However, this theoretical advantage comes with significant practical concerns, as discussed above under the secondary reads trade-off. Occult shifts substantial complexity to the client side, but the paper inadequately addresses the overhead and coordination requirements this imposes. The feasibility of expecting clients (which are typically frontend web proxies in the datacenter) to handle such sophisticated coordination with servers remains questionable.

The Fatal Flaw

The most concerning aspect about Occult emerges in Section 6 on fault tolerance. The paper reveals that correctness under crash failures requires synchronous replication of the master using Paxos or similar protocols - before the "asynchronous" replication to secondaries. Say what!? This requirement fundamentally undermines the system's claimed benefits from asynchronous replication.

Let me quote from the paper. "Occult exhibits a vulnerability window during which writes executed at the master may not yet have been replicated to slaves and may be lost if the master crashes. These missing writes may cause subsequent client requests to fail: if a client c’s write to object o is lost, c cannot read o without violating causality. This scenario is common to all causal systems for which clients do not share fate with the servers to which they write.

Occult's client-centric approach to causal consistency, however, creates another dangerous scenario: as datacenters are not themselves causally consistent, writes can be replicated out of order. A write y that is dependent on a write x can be replicated to another datacenter despite the loss of x, preventing any subsequent client from reading both x and y.

Master failures can be handled using well-known techniques: individual machine failures within a datacenter can be handled by replicating the master locally using chain-replication or Paxos, before replicating asynchronously to other replicas."

Unfortunately, the implementation ignores fault tolerance, and the evaluation omits crash scenarios entirely, focusing only on node slowdown. This significant limitation is mentioned in only one paragraph of the fault-tolerance section and isn't adequately addressed in the paper's discussion.

Learnings

Despite these criticisms, studying this paper has been valuable. It prompts important discussions about:

- Trade-offs in causality maintenance

- The role of synchronized clocks in distributed systems

- The importance of evaluating academic proposals against real-world requirements

For those interested in learning more, I recommend watching the conference presentation, which provides an excellent explanation of the protocol mechanics.

The Protocol

At its core, Occult builds upon vector clocks with some key modifications. Servers attach vector-timestamps to objects and track shard states using "shardstamps", and clients also maintain vector-timestamps to keep tabs on their interaction with the servers. Like vector clocks, shardstamp updates occur through pairwise maximum operations between corresponding entries.
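The timestamp bookkeeping can be sketched in a few lines. This is a simplified illustration under my own assumptions: one vector entry per shard, and hypothetical helper names `merge` and `write` that are not taken from the paper.

```python
def merge(a, b):
    """Entry-wise maximum of two equal-length vector timestamps."""
    return [max(x, y) for x, y in zip(a, b)]


def write(client_ts, shard, master_shardstamps):
    """Master bumps its shardstamp and stamps the object with the client's history."""
    master_shardstamps[shard] += 1
    object_ts = list(client_ts)
    object_ts[shard] = master_shardstamps[shard]
    return object_ts  # stored with the object and adopted by the client


# On a read, the client folds the object's timestamp into its own.
client_ts = merge([3, 0, 7], [1, 5, 7])
print(client_ts)  # [3, 5, 7]

# On a write to shard 1, the new object carries everything the client has seen.
masters = [4, 9, 7]
client_ts = write(client_ts, shard=1, master_shardstamps=masters)
print(client_ts)  # [3, 10, 7]
```

After the read, the client's entry for shard 1 rises to 5, so any later read from a secondary of shard 1 must come from a copy that has applied at least that many writes.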

This approach works straightforwardly for single-key updates but becomes more complex for transactions, where a commit timestamp must be chosen and applied consistently across all objects involved. Occult implements transactions using Optimistic Concurrency Control (OCC), but with specific validation requirements. The validation phase must verify two critical properties: first, that the transaction's read timestamp represents a consistent cut across the system, and second, that no conflicting updates occurred before commit. Transaction atomicity is preserved by making writes causally dependent, ensuring clients see either all or none of a transaction's writes - all without introducing write delays that could trigger slowdown cascades.
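The first validation property, that the reads form a consistent cut, can be illustrated with a simplified check. This is my own reading rather than the paper's exact algorithm: I assume each read records the shard it came from, the shardstamp of the replica that served it, and the causal timestamp stored with the object. The `Read` type and `is_consistent_cut` are hypothetical names, and the sketch does not cover the second property (detecting conflicting updates).

```python
from typing import NamedTuple


class Read(NamedTuple):
    shard: int
    replica_stamp: int  # shardstamp of the replica when the read was served
    deps: tuple         # causal timestamp stored with the object, one entry per shard


def is_consistent_cut(reads):
    """No object read may depend on a write newer than what another read could see."""
    return all(
        r.replica_stamp >= other.deps[r.shard]
        for r in reads
        for other in reads
    )


# The object on shard 1 depends on write 6 of shard 0,
# but shard 0 was read from a replica that had only applied 4 writes.
stale = [Read(0, 4, (4, 0)), Read(1, 9, (6, 9))]
fresh = [Read(0, 6, (6, 0)), Read(1, 9, (6, 9))]

print(is_consistent_cut(stale))  # False
print(is_consistent_cut(fresh))  # True
```

In an OCC setting, a failed check like the first one would lead to an abort and retry rather than to any waiting on the write path.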

The protocol employs several optimizations. To manage timestamp overhead, it uses both structural and temporal compression techniques. It also leverages loosely synchronized clocks to avoid spurious dependencies between shards, as I discuss below. A key contribution is PC-PSI (Per-Client Parallel Snapshot Isolation), a relaxed version of PSI that requires total ordering only within client sessions rather than globally. This modification enables more efficient transaction processing while maintaining essential consistency guarantees.

Discussion on the Use of Synchronized Clocks

A critical aspect of Occult is the requirement for monotonic clocks: clock regression would break causality guarantees for reads. While Lamport logical clocks could provide this monotonicity, synchronized physical clocks serve a more sophisticated purpose: they prevent artificial waiting periods caused by structural compression of timestamps. The compression folds multiple shards into the same entry of the compressed shardstamp by taking shard identifiers modulo n.

The paper illustrates this problem in Section 5 with a clear example: when two shards (i and j) map to the same compressed entry and have vastly different shardstamps (100 and 1000), a client writing to j would fail the consistency check when reading from a slave of i until i has received at least 1000 writes. If shard i never reaches 1000 writes, the client must perpetually fail over to reading from i's master shard. Rather than requiring explicit coordination between shards to solve this problem, Occult leverages loosely synchronized physical clocks to bound these differences naturally. This guarantees that shardstamps differ by no more than the relative offset between their clocks, independent of the write rate on different master shards.
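The 100-versus-1000 example is easy to reproduce in a toy model. The sketch below is my own illustration with assumed values: the `compress` helper, `n = 4`, and the shard ids 1 and 5 are hypothetical, chosen only so that the two shards collide.

```python
def compress(shard_stamps, n):
    """Fold per-shard stamps into n entries: shard s maps to entry s % n, keeping the max."""
    out = [0] * n
    for shard, stamp in shard_stamps.items():
        out[shard % n] = max(out[shard % n], stamp)
    return out


n = 4
i, j = 1, 5                      # 1 % 4 == 5 % 4, so both shards share entry 1
client = compress({j: 1000}, n)  # the client has just written to shard j
secondary_i_stamp = 100          # a secondary of shard i has applied 100 writes

# The client cannot tell whether entry 1 refers to shard i or shard j,
# so the check fails even though it never touched shard i.
can_read_from_secondary = secondary_i_stamp >= client[i % n]
print(client, can_read_from_secondary)  # [0, 1000, 0, 0] False
```

If shardstamps track loosely synchronized physical time instead of raw write counts, the stamps of i and j stay close to each other regardless of write rate, so this kind of spurious failure clears quickly instead of persisting until i sees 900 more writes.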

This approach resonates with my earlier work on Hybrid Logical Clocks (2013), where we argued for combining synchronized physical clocks with Lamport clock-based causality tracking. The effectiveness of this strategy is demonstrated dramatically in Occult's results - they achieve compression from 16,000 timestamps down to just 5 timestamps through synchronized clocks.

Occult's use of synchronized clocks for causality compression ties into our broader theme about the value of shared temporal reference frames in distributed systems. This connection between physical time and distributed system efficiency deserves deeper exploration.
