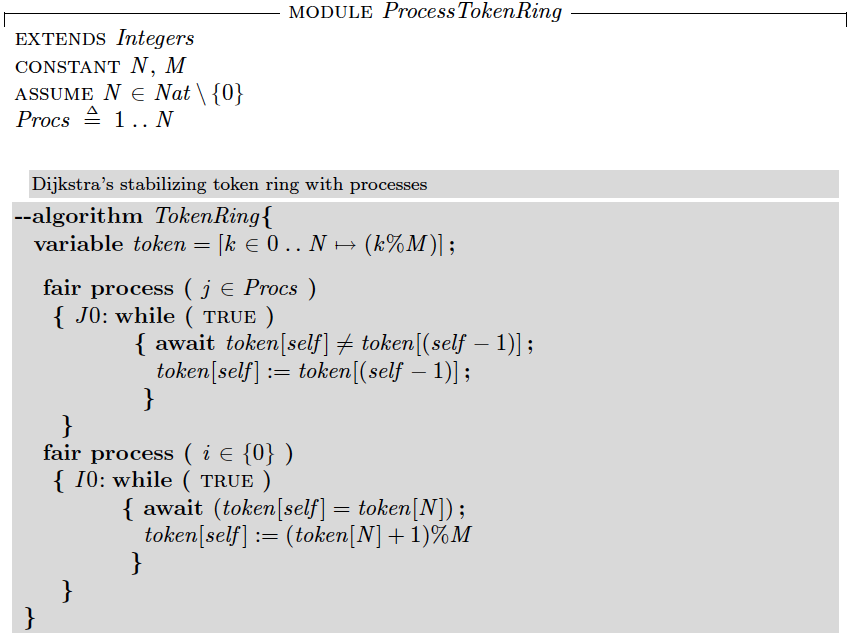

Dijkstra's stabilizing token ring algorithm

One of the classical algorithms I teach in my distributed systems class is Dijkstra's stabilizing token ring algorithm. This algorithm started self-stabilization as a subfield of fault tolerance, and it still receives interest after 40 years. There have probably been hundreds of self-stabilization papers that revisit Dijkstra's stabilizing token ring algorithm as part of a solution or as a case study. This algorithm never gets old for me either. I still enjoy talking about it in class and thinking about it once in a while, I guess because it is a very elegant algorithm. Linked is Dijkstra's original paper introducing the algorithm. This paper also includes two variants of the stabilizing token ring algorithm: the 3-state and 4-state token ring algorithms. Dijkstra would later do a follow-up writeup, titled "A belated proof of self-stabilization", where he gave a proof of stabilization for 3-state token ring progra...